In this article, we talk about the Android runtime environment. We will keep it brief and explain the differences between ART and Dalvik (DVM) in Android.

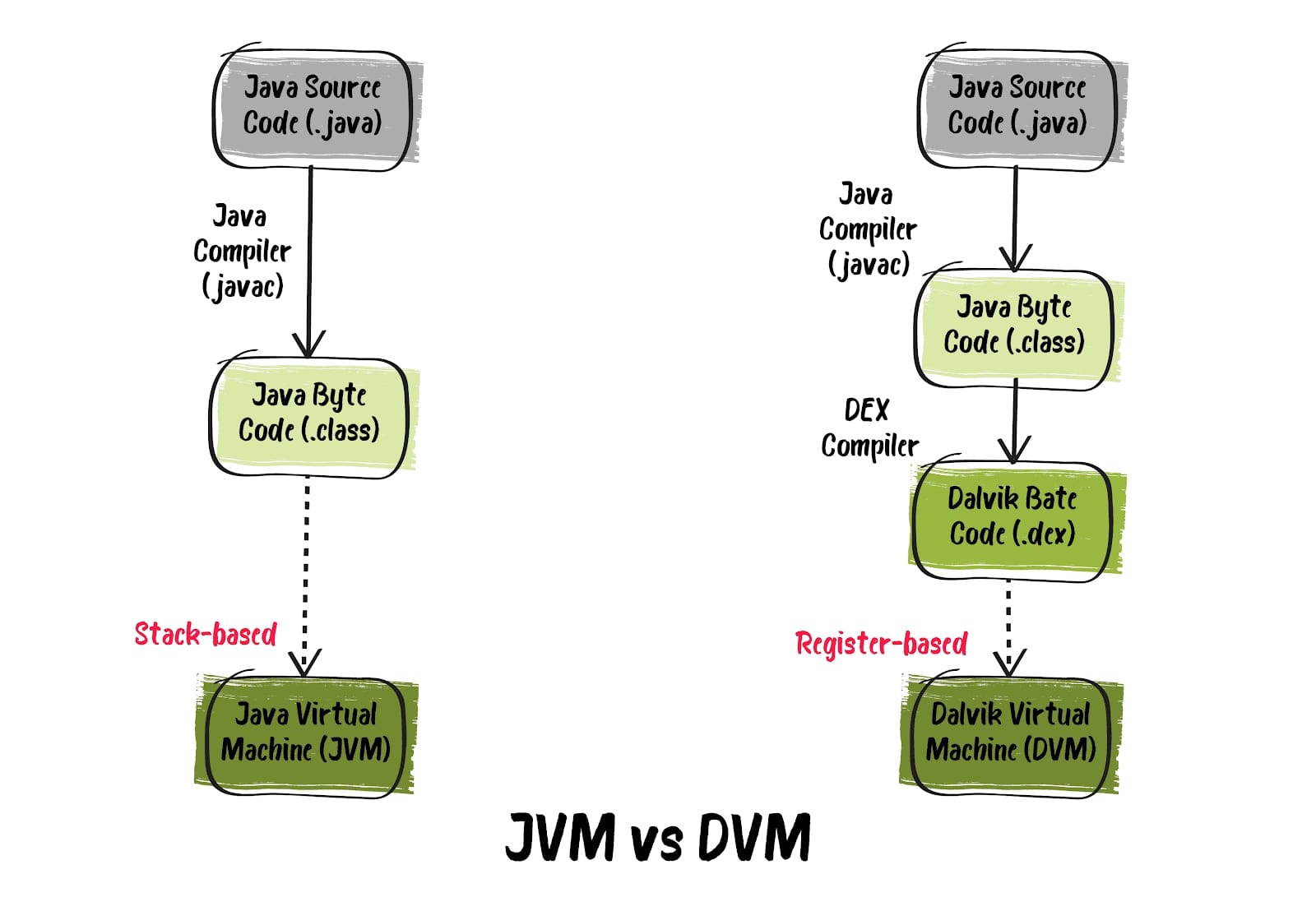

Let us clear up the difference between JVM and DVM first.

The Java Virtual Machine (JVM) is a virtual machine capable of executing Java bytecode regardless of the underlying platform. It is based on the principle “Write once, run anywhere.” Java bytecode can run on any machine that supports a JVM.

The Java compiler converts .java files into .class files (bytecode). The bytecode is passed to the JVM, which interprets it or compiles it into machine code for execution directly on the CPU.

JVM features:

The Dalvik Virtual Machine (DVM) is a virtual machine originally written by Dan Bornstein, with contributions from others, as part of the Android mobile platform. Unlike the JVM, it executes .dex bytecode rather than Java bytecode.

We can say that Dalvik is a runtime for Android operating system components and user applications. Each process runs in its own isolated domain. When a user starts an app (or the operating system launches one of its components), Zygote, a preinitialized Dalvik VM process, forks a separate, secure process that shares memory with it, and the VM in that process serves as the app's runtime environment. In short, Android internally looks like a set of Dalvik virtual machines, each executing one app.

DVM features:

A crucial step in creating an APK is converting the Java bytecode to .dex bytecode for the Android Runtime, and Android developers should know about it. The dex compiler mostly works behind the scenes in routine application development, but it directly affects the application build time, .dex file size, and runtime performance.

A single .dex file contains several classes at once. Repeated strings and other constants used in multiple .class files are included only once to save space. Java bytecode is also converted to an alternative instruction set used by DVM. An uncompressed .dex file is usually a few percent smaller than a compressed Java archive (JAR) built from the same .class files.
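To make the space saving more concrete, here is a minimal sketch of the idea of sharing constants across classes. It is not the real .dex format or any Android API: the class name `SharedPoolSketch`, its methods, and the sample constants are all hypothetical and chosen only for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Hypothetical illustration only; this does not read or write real .dex files.
final class SharedPoolSketch {

    // Total number of constant entries when every class keeps its own pool,
    // the way separate .class files do.
    static int separatePoolsSize(List<List<String>> classPools) {
        int total = 0;
        for (List<String> pool : classPools) {
            total += pool.size();
        }
        return total;
    }

    // Merges all per-class pools into one shared pool that stores each
    // distinct constant once, preserving first-seen order.
    static List<String> sharedPool(List<List<String>> classPools) {
        Set<String> unique = new LinkedHashSet<>();
        for (List<String> pool : classPools) {
            unique.addAll(pool);
        }
        return new ArrayList<>(unique);
    }

    public static void main(String[] args) {
        // Two made-up classes that reference mostly the same constants.
        List<List<String>> pools = Arrays.asList(
            Arrays.asList("Ljava/lang/String;", "toString", "MainActivity"),
            Arrays.asList("Ljava/lang/String;", "toString", "LoginActivity"));
        System.out.println("separate pools: " + separatePoolsSize(pools));
        System.out.println("shared pool:    " + sharedPool(pools).size());
    }
}
```

In this toy input, the two classes hold six entries between them, while the shared pool needs only four. The more classes reference the same names and types, the larger the saving becomes.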

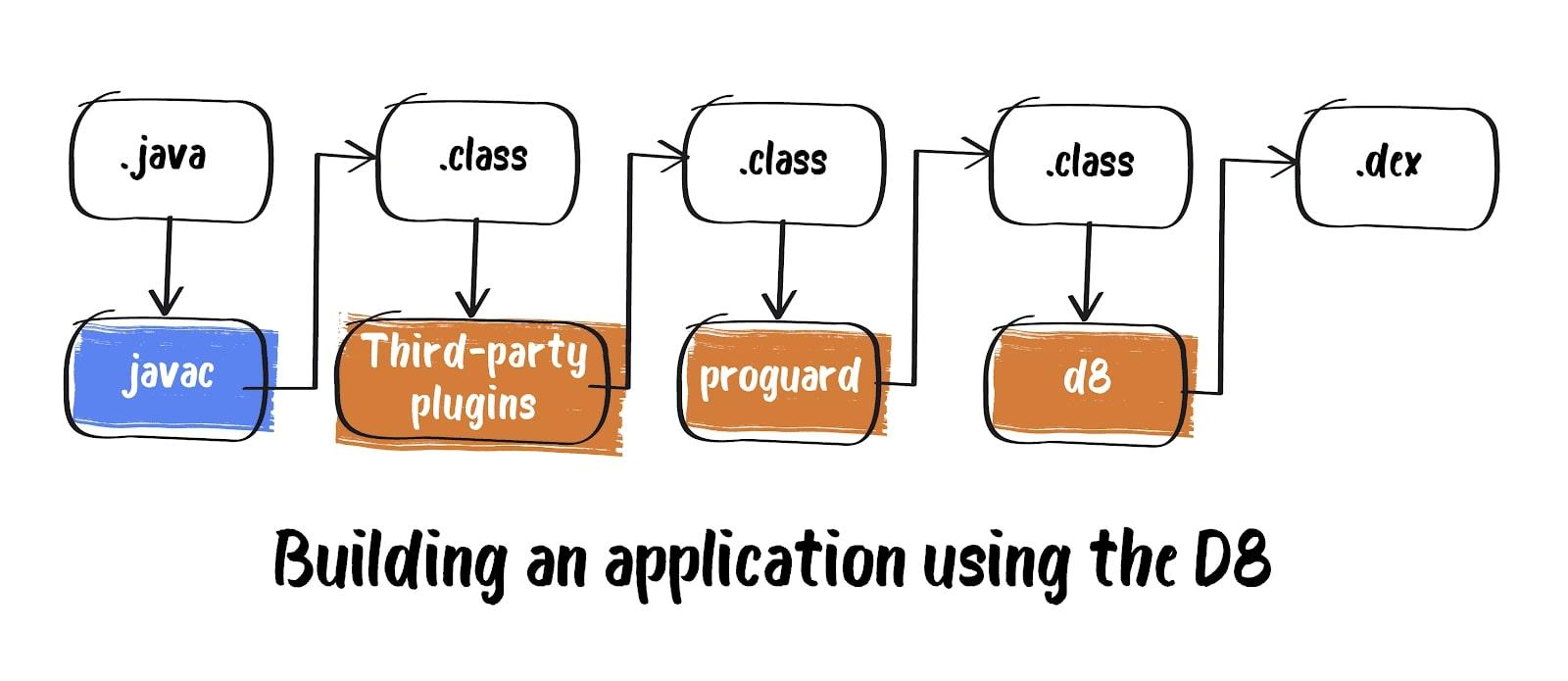

Initially, .class files were converted to .dex using the built-in DX compiler. Starting with Android Studio 3.1, D8 became the default compiler. Compared to DX, D8 compiles faster and outputs smaller .dex files, with the same or better runtime performance. In this pipeline, the open-source utility ProGuard minifies the Java bytecode before it is converted, so the resulting .dex file behaves the same but is smaller. That file is then used to build the APK, which is finally deployed to the Android device.

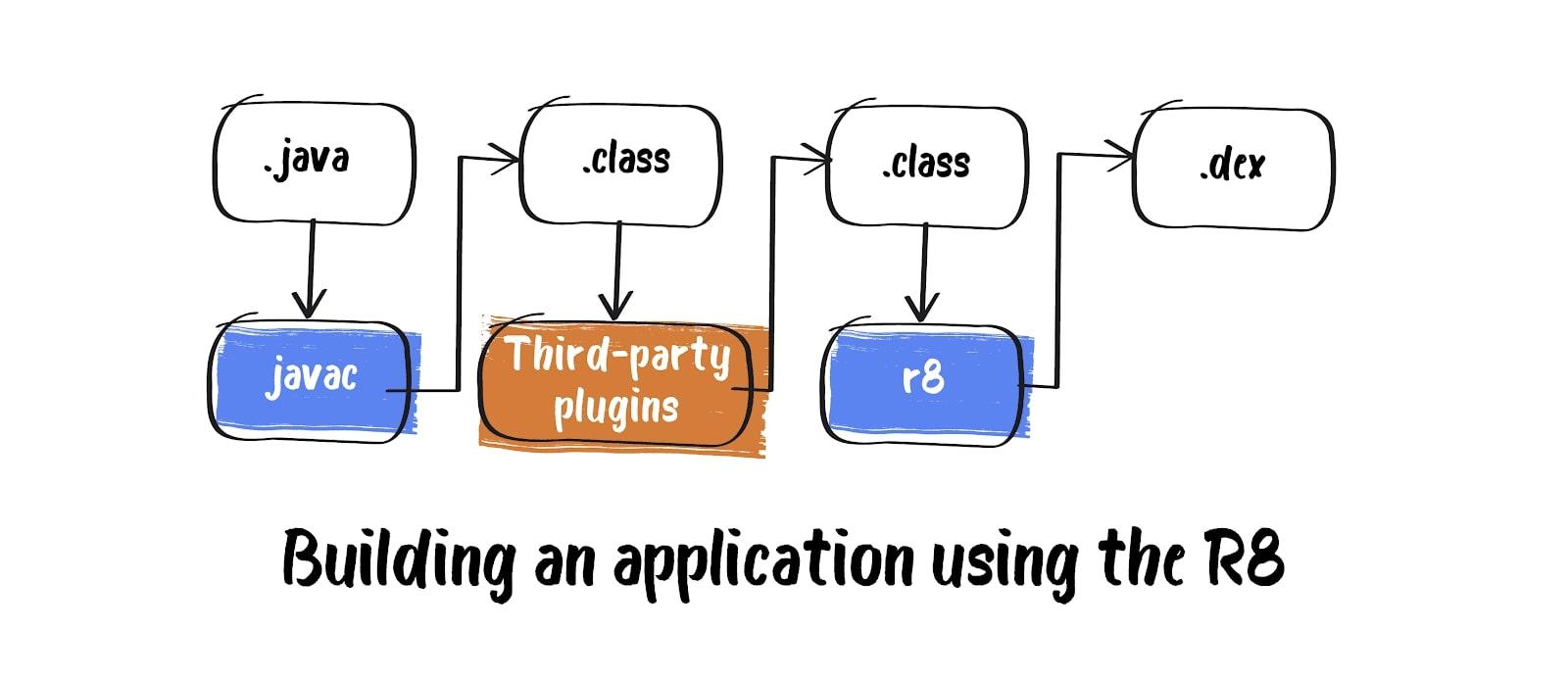

R8 followed D8 in 2018. It is essentially D8 extended with code shrinking and optimization.

When working with Android Studio 3.4 and Android Gradle plugin 3.4.0 or higher, ProGuard is no longer used for code optimization during compilation. The plugin works with R8 by default instead, and R8 performs code shrinking, optimization, and obfuscation itself. Although R8 offers only a subset of the functions provided by ProGuard, it shrinks the code and converts Java bytecode to dex bytecode in a single step, which further reduces the build time.

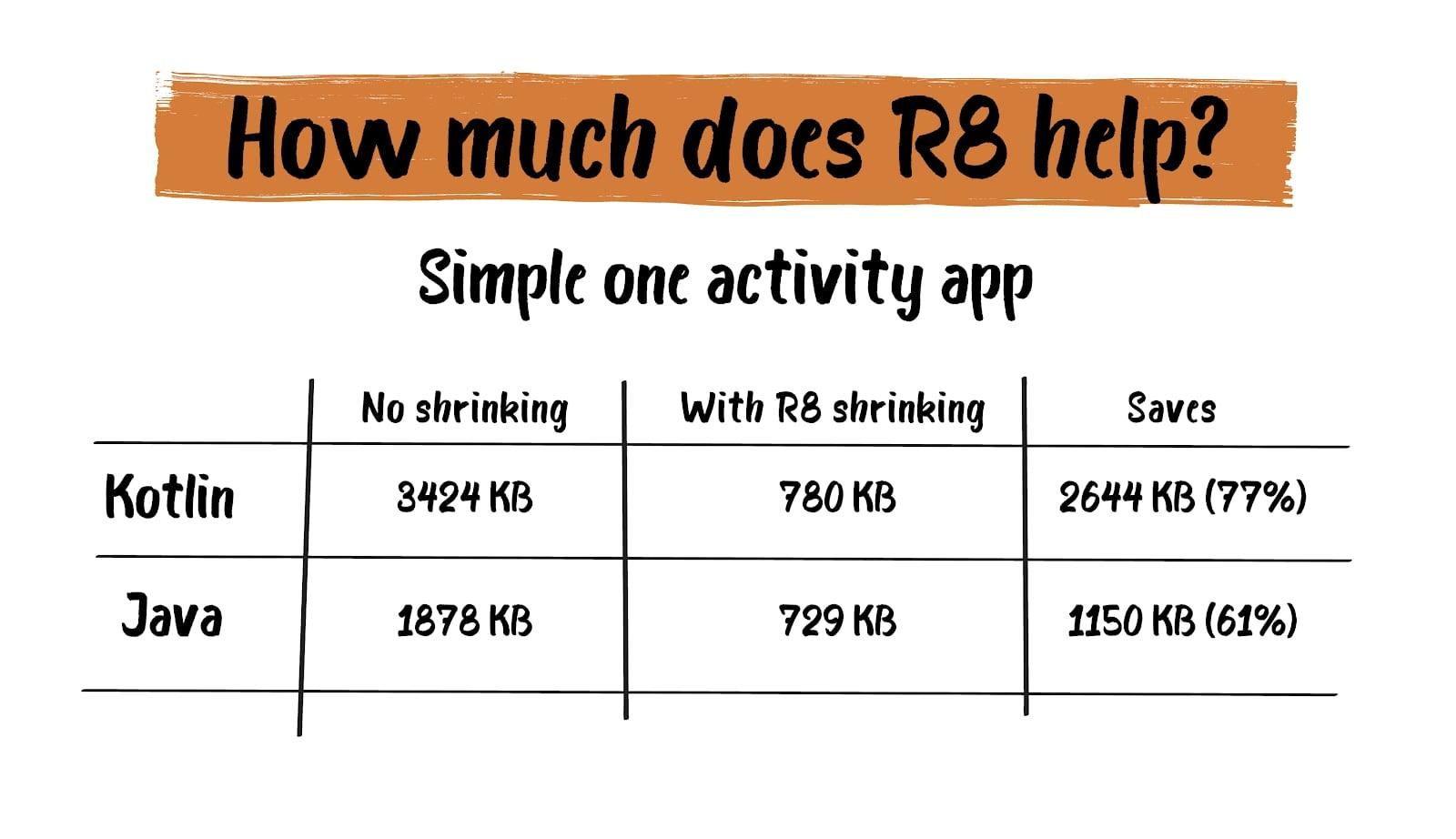

We all know that most applications use third-party libraries such as Guava, Jetpack, Gson, Google Play Services. When we use one of these libraries, often only a small part of each library is used in an application. Without code shrinking, the entire code of the library is stored in your app.

Extra code also appears when developers write verbose code to improve readability and maintainability. For example, meaningful variable names and the builder pattern make it easier for others to understand your code, but such patterns usually result in more code than is strictly needed.

In this case, R8 comes to the rescue. It can significantly reduce the application's size by removing code the app never uses and optimizing the code it does use.
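The core idea behind code shrinking can be sketched as a reachability search over a call graph: start from the entry points and keep only what can be reached from them. The sketch below is a simplified illustration under that assumption and is not how R8 is actually implemented. The class `ShrinkSketch` and the method names in the sample graph are hypothetical.

```java
import java.util.ArrayDeque;
import java.util.Arrays;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical illustration of shrinking by reachability; not R8's real algorithm.
final class ShrinkSketch {

    // Returns every method reachable from the entry points by following
    // call edges. Anything outside this set is a candidate for removal.
    static Set<String> reachable(Map<String, List<String>> calls, List<String> entryPoints) {
        Set<String> kept = new TreeSet<>();
        Deque<String> work = new ArrayDeque<>(entryPoints);
        while (!work.isEmpty()) {
            String method = work.pop();
            // Expand a method's callees only the first time we see it.
            if (kept.add(method)) {
                work.addAll(calls.getOrDefault(method, Collections.<String>emptyList()));
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        // Made-up call graph: the app uses only part of a JSON library.
        Map<String, List<String>> calls = new HashMap<>();
        calls.put("MainActivity.onCreate", Arrays.asList("Json.parse"));
        calls.put("Json.parse", Arrays.asList("Json.readToken"));
        calls.put("Json.prettyPrint", Arrays.asList("Json.readToken")); // never called by the app
        System.out.println(reachable(calls, Arrays.asList("MainActivity.onCreate")));
    }
}
```

Here `Json.prettyPrint` is never reached from `MainActivity.onCreate`, so it does not appear in the kept set. A real shrinker also has to account for reflection and other entry points it cannot see, which is what keep rules are for.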

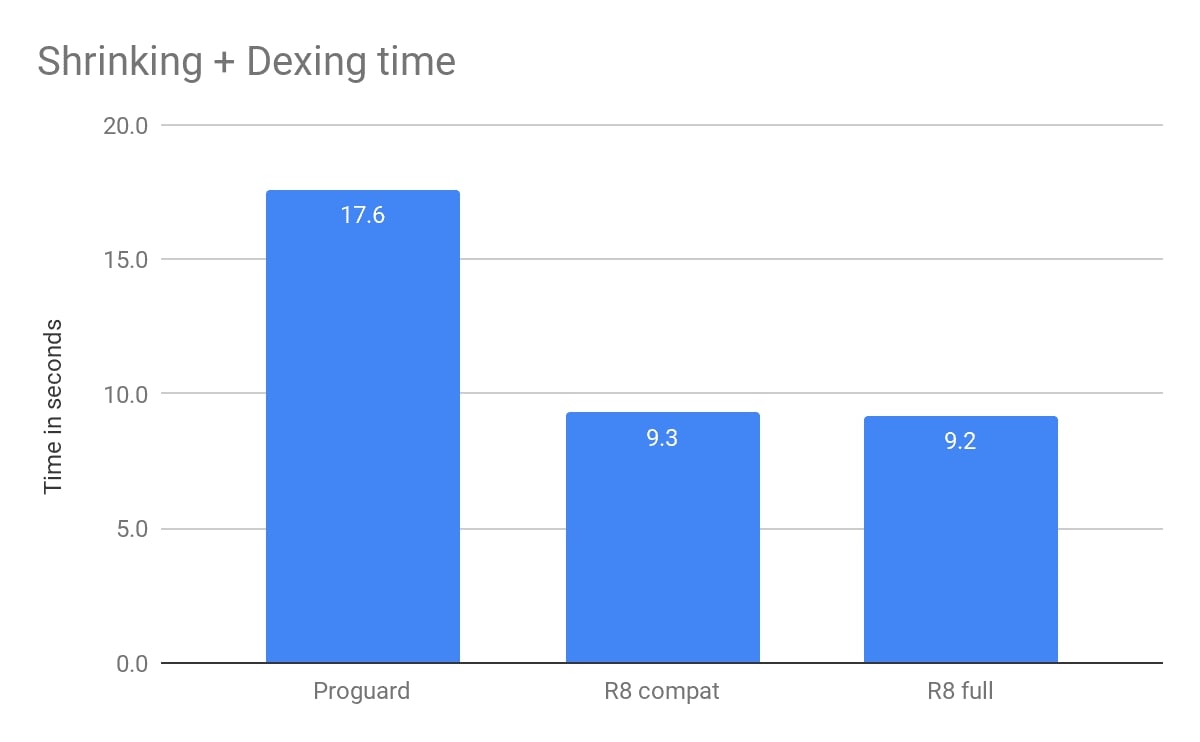

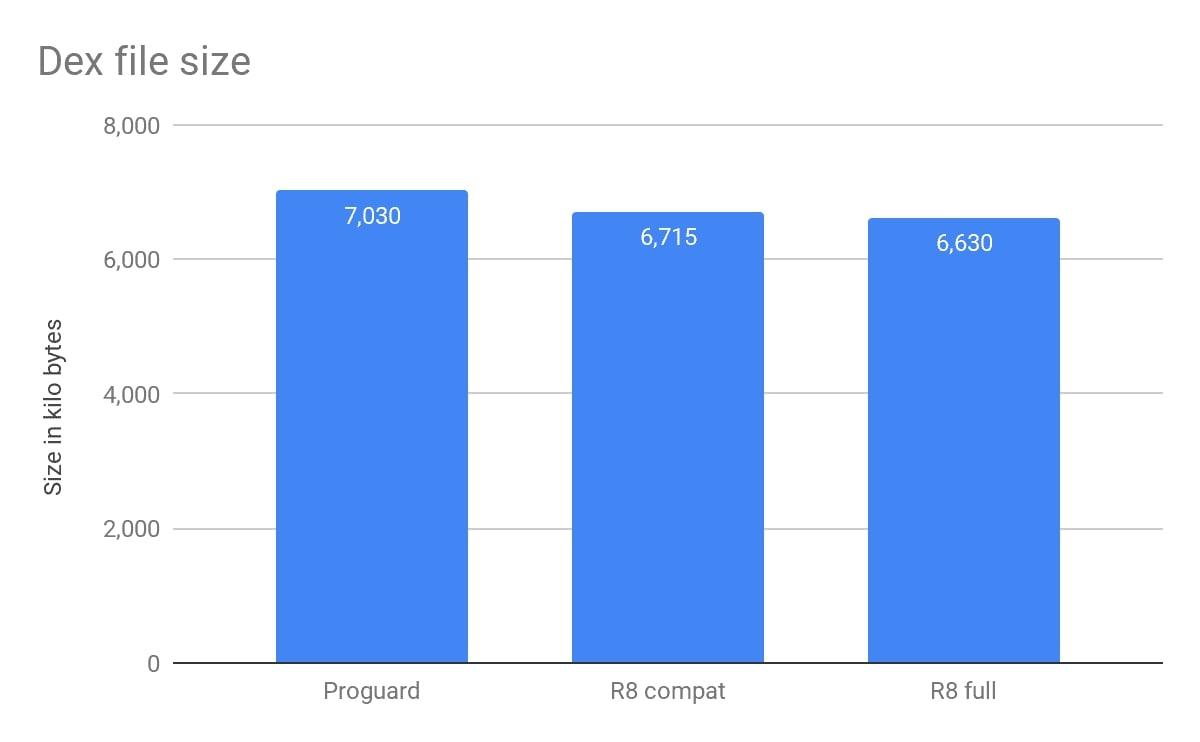

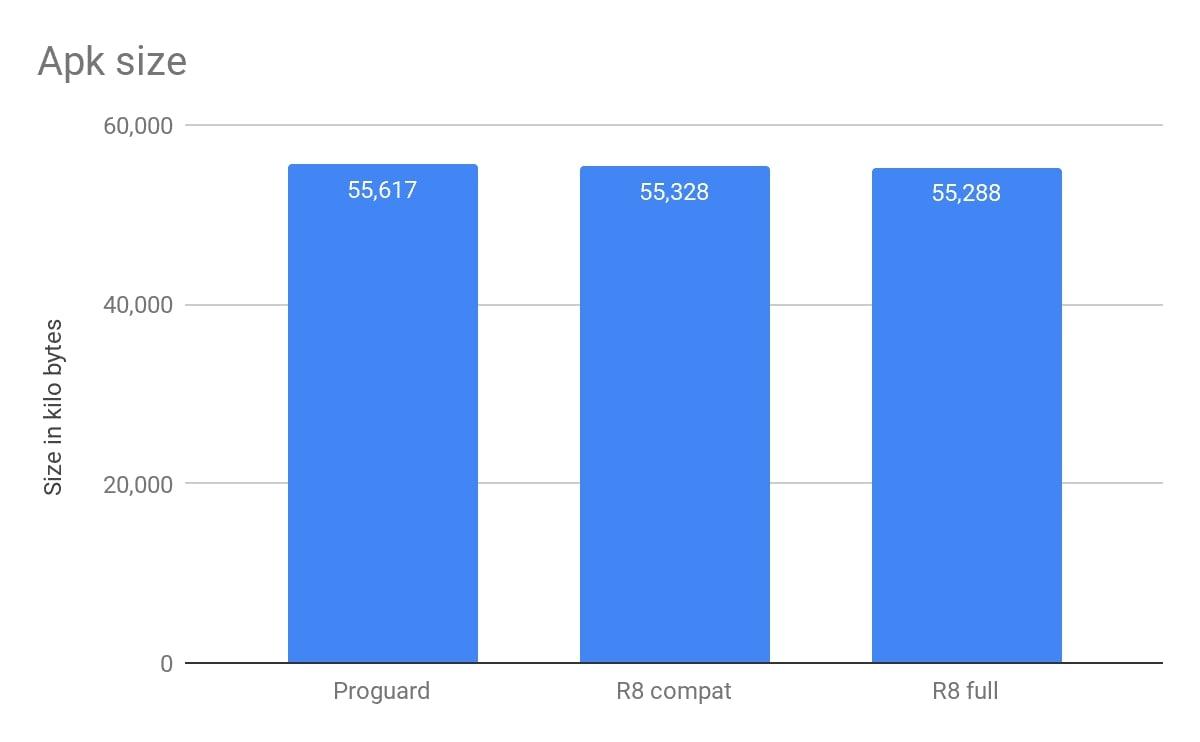

As an example, I will cite figures from the Shrinking Your App with R8 report presented at the Android Dev Summit ’19:

And below you can see the R8 effectiveness when the beta version was presented (taken from the Android Developers Blog source):

For detailed information, check the official documentation and the report mentioned above.

DVM was designed specifically for mobile devices and was used as the virtual machine for running Android apps up until Android 4.4 KitKat.

Starting from this version, ART was introduced as a runtime environment, and in Android 5.0 (Lollipop), ART completely replaced Dalvik.

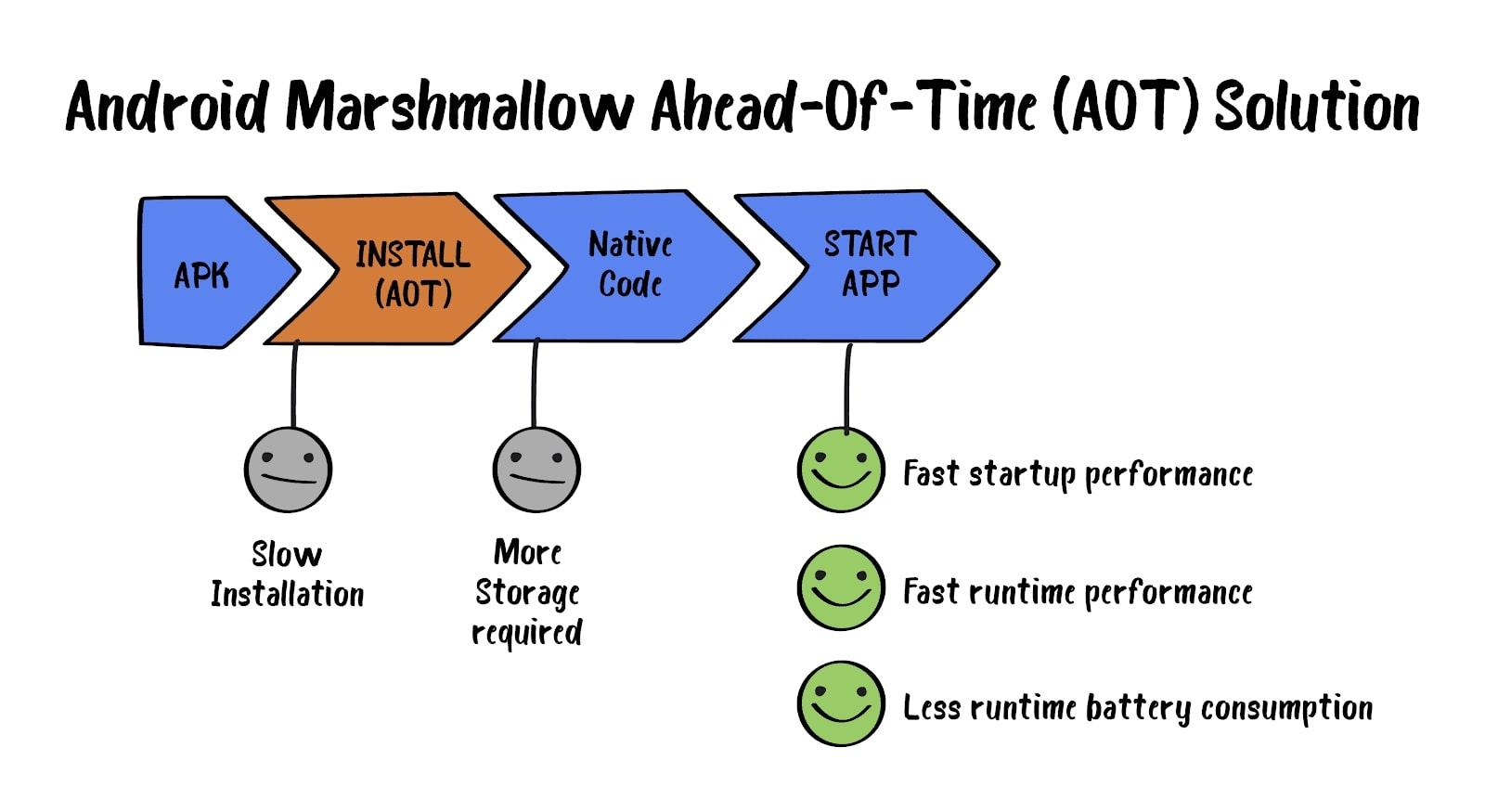

The main visible difference between ART and DVM is that ART uses AOT (ahead-of-time) compilation, while DVM uses JIT (just-in-time) compilation. More recently, ART started using a hybrid of AOT and JIT. We'll take a look at this a little further on.

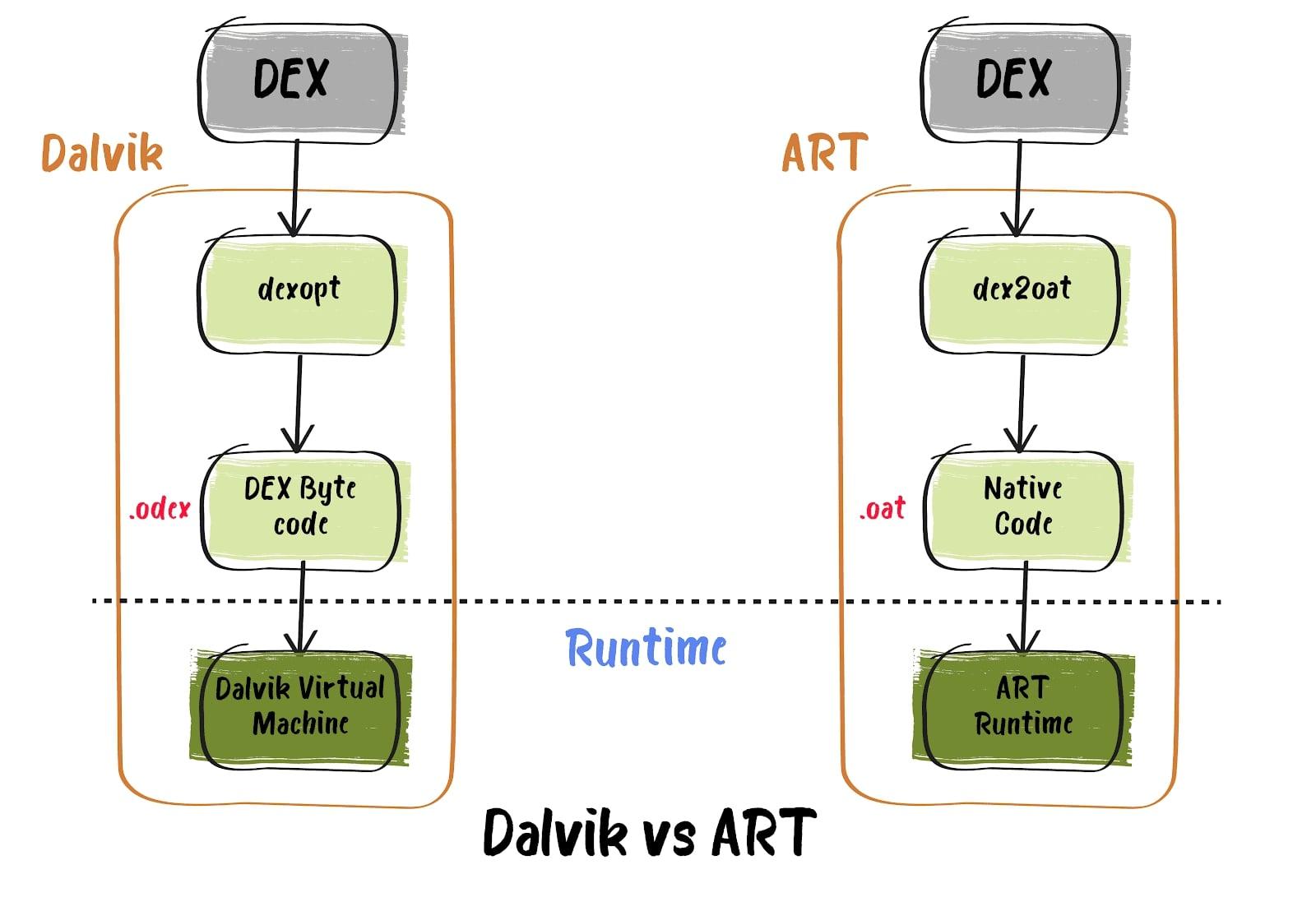

DVM

ART

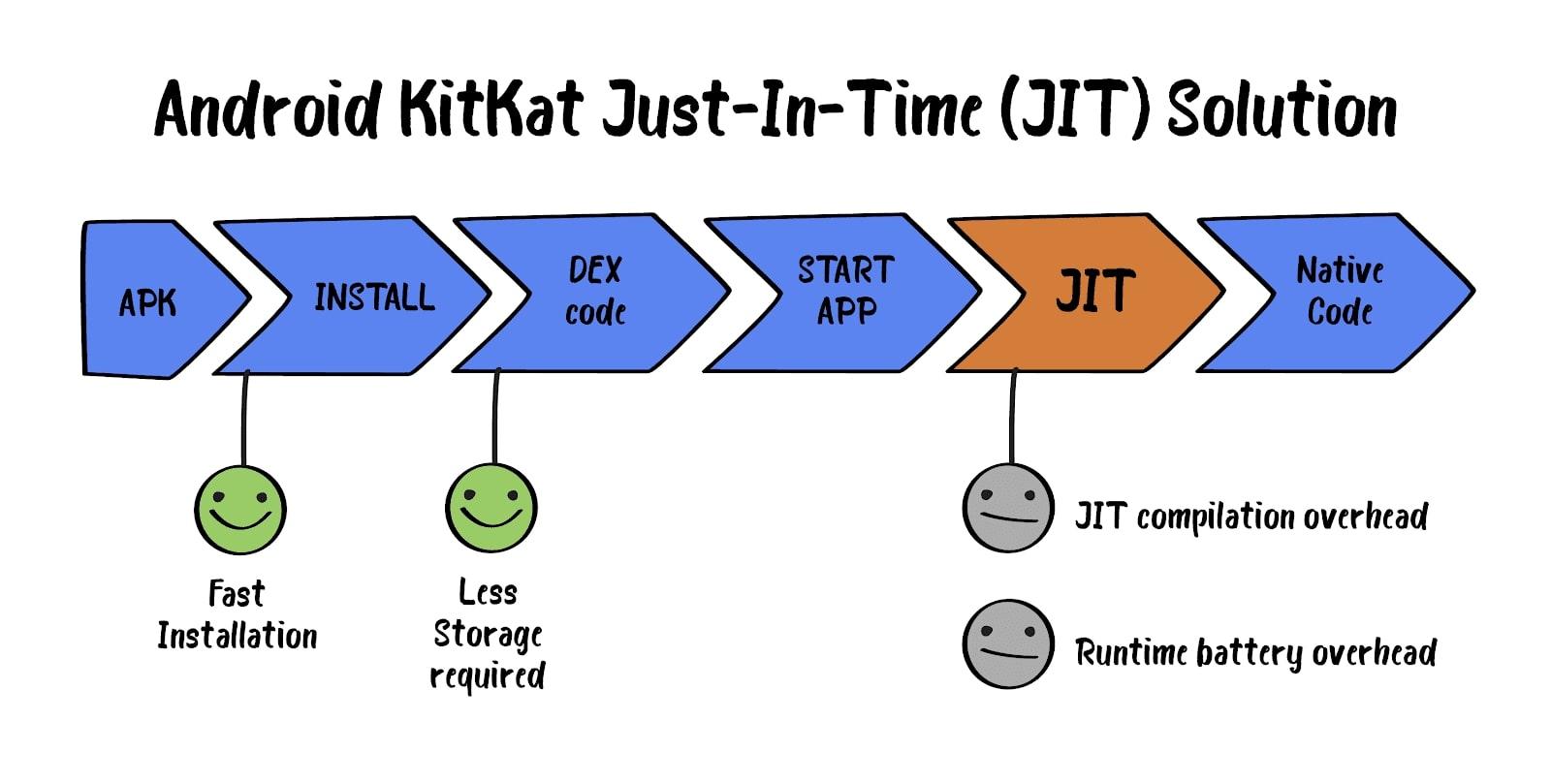

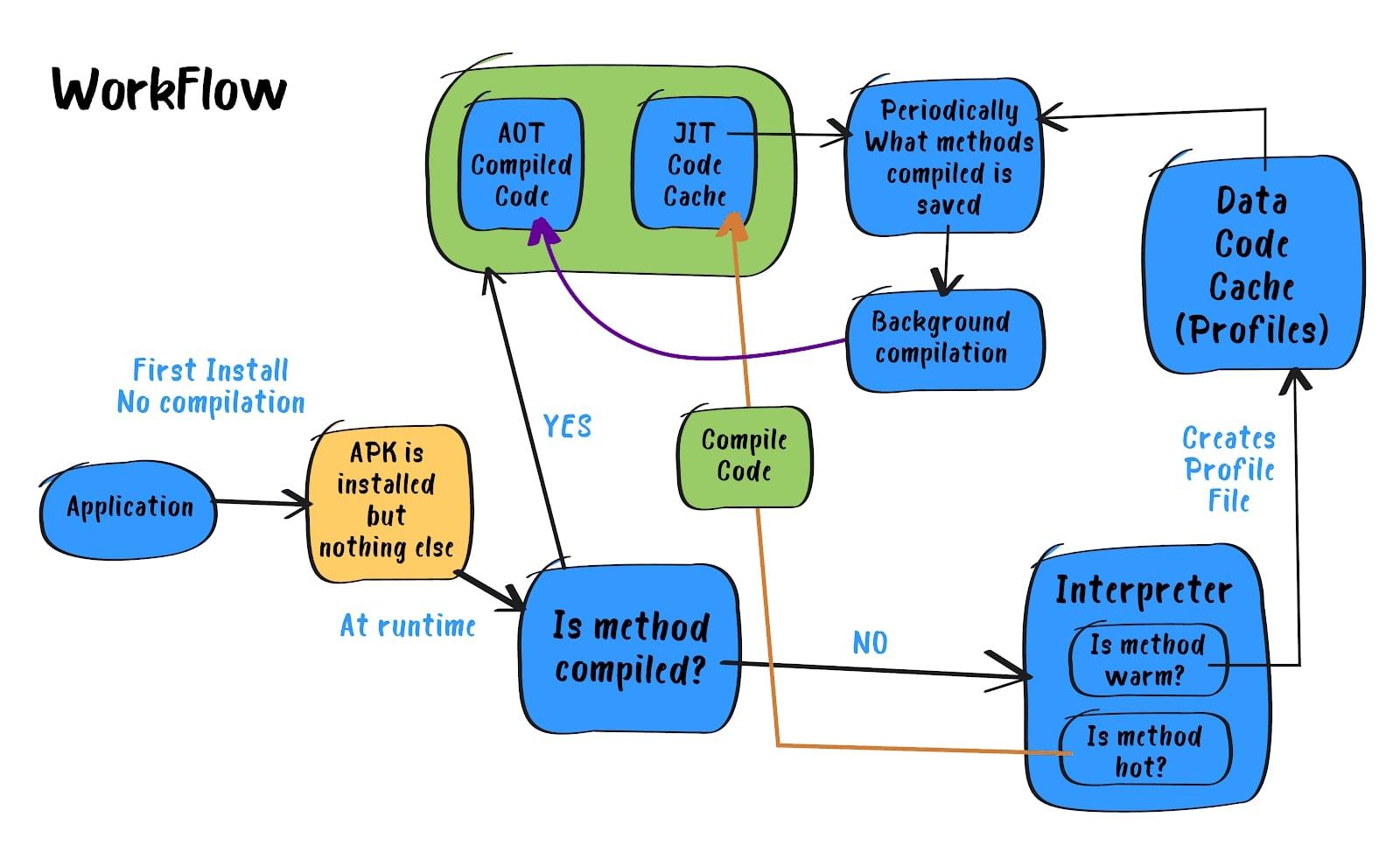

Since Android 7, the Android Runtime Environment includes a JIT compiler with code profiling. The JIT compiler complements the AOT compiler, improves runtime performance, saves disk space, and accelerates app and system updates.

It’s carried out according to the following scheme:

Instead of AOT-compiling each application during installation, ART runs the application under a VM using the JIT compiler (almost the same as in Android < 5.0), while keeping track of the pieces of app code that execute most often. This information is later used for AOT compilation of those code fragments. That last step is performed only while the smartphone is idle and charging.
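The scheme above can be sketched as a small model: count invocations while the app runs, treat methods above a threshold as hot, and compile them ahead of time only when the device is idle and charging. This is a toy model under those assumptions and not ART's implementation. The class `HotMethodProfileSketch`, its methods, and the threshold value are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical model of profile-guided compilation; not ART's real code.
final class HotMethodProfileSketch {
    private final int hotThreshold; // assumed value chosen by the caller, not ART's
    private final Map<String, Integer> callCounts = new HashMap<>();
    private final Set<String> aotCompiled = new TreeSet<>();

    HotMethodProfileSketch(int hotThreshold) {
        this.hotThreshold = hotThreshold;
    }

    // Called for every method invocation while the app runs under the JIT.
    void recordInvocation(String method) {
        callCounts.merge(method, 1, Integer::sum);
    }

    // Methods whose invocation count has reached the threshold.
    Set<String> hotMethods() {
        Set<String> hot = new TreeSet<>();
        for (Map.Entry<String, Integer> entry : callCounts.entrySet()) {
            if (entry.getValue() >= hotThreshold) {
                hot.add(entry.getKey());
            }
        }
        return hot;
    }

    // Models the background step: hot methods are AOT-compiled only when the
    // device is both idle and charging. Returns a copy of the compiled set.
    Set<String> compileIfIdleAndCharging(boolean idle, boolean charging) {
        if (idle && charging) {
            aotCompiled.addAll(hotMethods());
        }
        return new TreeSet<>(aotCompiled);
    }

    public static void main(String[] args) {
        HotMethodProfileSketch profile = new HotMethodProfileSketch(3);
        for (int i = 0; i < 5; i++) {
            profile.recordInvocation("Feed.render");
        }
        profile.recordInvocation("Settings.open");
        System.out.println("in use:           " + profile.compileIfIdleAndCharging(false, true));
        System.out.println("idle and charging: " + profile.compileIfIdleAndCharging(true, true));
    }
}
```

In this model, nothing is compiled ahead of time while the device is in use. Once it is idle and charging, only the frequently called `Feed.render` is compiled, and the rarely used `Settings.open` stays on the JIT path.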

Simply put, two different approaches now work together, which brings its own benefits:

You can find more information about the JIT compiler implementation in ART here.

In this article, we have analyzed the main differences between DVM and ART, and generally looked at how Android improved its development tools over time.

ART is still under development: new features are being added to improve the experience for both users and developers.

We hope this article will be helpful for those who are just getting started with Android.